What you will learn in this part:

- The data required to train our speech synthesis model

- The toolkit for training, and performing inference with, our model

- Working with a compute cluster:

  - debugging and testing using an interactive session

  - submitting your first substantial job

  - monitoring training progress

Any errors you encounter during this part will be due to human error (yours!) and not the tools or the data. You must successfully complete this part before trying it with your own data.

Do not start this part of the assignment until we tell you to!

Existing speech corpora available to you

To save disk space (which is expensive and costs real money), you must use a shared copy of the existing data. Do not make your own copy of it! Take a look at the shared copy, using an Eddie login node:

# Shared dataset directory

DATA_DIR=/exports/chss/eddie/ppls/groups/slpgpustorage/tts_cw

# Arctic dataset

# SLT speaker, American, female

ls ${DATA_DIR}/cmu_us_slt_arctic/wav

less ${DATA_DIR}/cmu_us_slt_arctic/etc/txt.done.data

# There are other speakers: replace slt with bdl, rms, or clb

# LJ Speech dataset

ls ${DATA_DIR}/LJSpeech-1.1/wavs

less ${DATA_DIR}/LJSpeech-1.1/metadata.csv
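A quick sanity check on a corpus is to confirm that every entry in its filelist has a matching audio file. The sketch below assumes the festival-style format of the ARCTIC txt.done.data files, where each line looks like `( arctic_a0001 "Some text." )`. The function names are our own illustration and are not part of any toolkit.

```shell
# Hypothetical helpers (not part of EveryVoice or the course scripts).

# Print the utterance IDs from a festival-format filelist,
# where each line looks like: ( arctic_a0001 "Some text." )
list_utt_ids() {
    awk '$1 == "(" && NF >= 3 { print $2 }' "$1"
}

# Print the IDs that have no matching <id>.wav file in the given directory.
# Arguments: filelist, wav directory.
missing_wavs() {
    list_utt_ids "$1" | while read -r id; do
        [ -f "$2/${id}.wav" ] || echo "$id"
    done
}
```

For example, `list_utt_ids ${DATA_DIR}/cmu_us_slt_arctic/etc/txt.done.data | wc -l` counts the utterances, and `missing_wavs ${DATA_DIR}/cmu_us_slt_arctic/etc/txt.done.data ${DATA_DIR}/cmu_us_slt_arctic/wav` should print nothing if the corpus is complete.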

Set up your environment

Do the following once, to set up your environment:

# Shared dataset directory

DATA_DIR=/exports/chss/eddie/ppls/groups/slpgpustorage/tts_cw

# Load anaconda

module load anaconda

# Set up the environment path - You only need to run the following commands once.

conda config --add envs_dirs ${DATA_DIR}/anaconda/envs

conda config --add pkgs_dirs ${DATA_DIR}/anaconda/pkgs

# Activate the environment

conda activate py312torch27cuda118

Then, the next time you log in, all you need to do is:

module load anaconda && conda activate py312torch27cuda118

You should see (py312torch27cuda118) at the start of your shell prompt, to remind you that you have activated this environment. You need to keep this environment activated throughout this assignment. (If for some reason you want to deactivate it, run conda deactivate.)
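If you would rather not rely on the prompt alone, a script can test which environment is active. This is a minimal sketch: it relies on the CONDA_DEFAULT_ENV variable that conda sets on activation, and assumes the environment was activated by name as shown above. The function name check_env is hypothetical.

```shell
# Hypothetical helper: succeed only if the expected conda environment is active.
# conda sets CONDA_DEFAULT_ENV when an environment is activated
# (assumption: it holds the environment name when activating by name).
check_env() {
    expected=${1:-py312torch27cuda118}
    if [ "${CONDA_DEFAULT_ENV:-}" = "$expected" ]; then
        echo "OK: ${expected} is active"
    else
        echo "Environment ${expected} is not active; run: module load anaconda && conda activate ${expected}" >&2
        return 1
    fi
}
```

Running `check_env` with no arguments checks for py312torch27cuda118; pass a different name as the first argument to check for another environment.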

Create a new EveryVoice project

EveryVoice is a toolkit for training a model similar to FastSpeech 2. It is freely available on GitHub and has documentation. Do not install your own copy from GitHub! Use the shared copy that is already on Eddie.

# if you didn't already do this in the current session, activate your environment:

module load anaconda && conda activate py312torch27cuda118

# Make sure you are in the right directory (replace s1234567 with your own username)

EXP_DIR=/exports/chss/eddie/ppls/groups/slpgpustorage/users/s1234567/tts_cw

mkdir -p ${EXP_DIR}

cd ${EXP_DIR}

# Start the configuration wizard

everyvoice new-project

The wizard will guide you through the process by asking you several questions. The first time you train a model, you will use data from a single speaker (pick one from the available ARCTIC corpora). Here are the answers to some of the questions (the rest should be obvious). To abort or go back one step, press Ctrl-C.

- Where should the Configuration Wizard save your files?

- Current directory, specified using “.”

- Where is your data filelist?

- Type “/” and then use the menus to browse to: /exports/chss/eddie/ppls/groups/slpgpustorage/tts_cw/cmu_us_slt_arctic/etc/txt.done.data (or whichever ARCTIC speaker you have chosen to use)

- Select which format your filelist is in: festival

- Which representation is your text in? characters

- Which of the following text transformations would like to apply to your dataset’s characters?

- press “space” to select Lowercase and NFC Normalization

- Would you like to specify an alternative speaker ID for this dataset instead? no

- language: eng

- Keep the current g2p settings and continue

- Where are your audio files?

- Browse to: /exports/chss/eddie/ppls/groups/slpgpustorage/tts_cw/cmu_us_slt_arctic/wav/

- Which of the following audio preprocessing options would you like to apply?

- select only Normalization (-3.0dB)

- Which format would you like to output the configuration to? yaml

Using multiple datasets to create a multi-speaker (or multi-style) model

Don’t do this for your first model, but you will need this information when training a model on your own recordings.

Training a model on multiple datasets is very easy – just follow the Wizard! For example, to train a 2-speaker model on data from the ARCTIC speaker ‘slt’ and your own recordings:

- Would you like to specify an alternative speaker ID for this dataset instead? yes

- Please enter the desired speaker ID: slt

- …

- Do you have more datasets to process? yes

- …

- Please enter the desired speaker ID: Simon

- …

- Do you have more datasets to process? no

To train a multi-style model, simply treat each speaker+style combination as a unique speaker (e.g., ‘slt’, ‘Simon_reading’, ‘Simon_conversational’).

There are other ways to achieve the same result, which involve creating a filelist that has a speaker ID column. Ask for help in the lab if you want to learn how to do it that way. You will need to use this approach if you only want to use part of an existing corpus (e.g., only the ‘B’ utterances from slt ARCTIC).
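Selecting part of a corpus is one ingredient of that approach. As a rough sketch, the function below keeps only the entries of a festival-format filelist whose utterance ID starts with a given prefix, assuming the ARCTIC naming scheme in which the 'B' utterances have IDs beginning with arctic_b. The function name is hypothetical, and the sketch does not add a speaker ID column, since the exact layout of that column is not described here.

```shell
# Hypothetical helper: keep only the filelist entries whose utterance ID
# starts with the given prefix (e.g. arctic_b for the ARCTIC 'B' set).
# Assumes festival format: ( utterance_id "Some text." )
select_utts() {
    awk -v p="$2" '$1 == "(" && index($2, p) == 1' "$1"
}
```

For example, `select_utts ${DATA_DIR}/cmu_us_slt_arctic/etc/txt.done.data arctic_b > slt_b.data` would write the selected entries to a new file in your own directory (slt_b.data is just an illustrative name).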

Preprocess the data

# Start an interactive CPU session with 4 CPU cores for 2 hours.

qlogin -pe interactivemem 4 -l h_rt=2:00:00

# In the unlikely event that you experience a long waiting time,

# try adding -P ppls_ssgpu to use our priority job code

# (this costs money, so only use when necessary)

# Activate your environment

module load anaconda && conda activate py312torch27cuda118

# Set the paths

EXP_DIR=/exports/chss/eddie/ppls/groups/slpgpustorage/users/s1234567/tts_cw

# Put the project name you have entered in the configuration wizard here.

TTS_PROJECT=your_project_name

# Go to the project directory

cd ${EXP_DIR}/${TTS_PROJECT}

# Start the preprocessing

everyvoice preprocess config/everyvoice-text-to-spec.yaml

# This should be quite fast - under 3 minutes for 'slt'

# (Ignore any warnings about 'pkg_resources')

# IMPORTANT!

# as soon as you have finished this step, quit the interactive session

# the cluster has a limited number of interactive nodes,

# and other people might be waiting to use one

# Tip: Ctrl+D is the quickest way to log out

# Otherwise, type this:

logout

You should now be back on a login node. In a terminal, or using VS Code, browse through the contents of ${EXP_DIR}/${TTS_PROJECT}/preprocessed to see what files have been created.
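If you prefer a summary to browsing by hand, the following sketch prints each subdirectory together with the number of files it contains. It makes no assumption about which subdirectories preprocessing creates, and the function name is our own.

```shell
# Hypothetical helper: print each subdirectory of a directory
# together with the number of files it contains (tab-separated).
count_files_per_dir() {
    for d in "$1"/*/; do
        [ -d "$d" ] || continue
        n=$(find "$d" -type f | wc -l | tr -d ' ')
        printf '%s\t%s\n' "$(basename "$d")" "$n"
    done
}
```

Run it as `count_files_per_dir ${EXP_DIR}/${TTS_PROJECT}/preprocessed`. For a single-speaker corpus you would expect the counts to be related to the number of utterances in the filelist.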

Train the model

Edit line 60 of config/everyvoice-text-to-spec.yaml to specify the vocoder we will be using (note: you cannot use environment variables in this config file):

vocoder_path: /exports/chss/eddie/ppls/groups/slpgpustorage/tts_cw/hifigan_universal_v1_everyvoice.ckpt
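A typo in this path is easy to make and will only surface later. The sketch below reads the vocoder_path value back out of the config file and checks that the file exists. It uses plain text matching rather than a YAML parser, so it assumes the value is written unquoted on a single line, as above. The function name is hypothetical.

```shell
# Hypothetical helper: read the vocoder_path value from a YAML config
# and check that the file it names exists.
# Assumes an unquoted value on a single "vocoder_path: ..." line.
check_vocoder_path() {
    vocoder=$(sed -n 's/^[[:space:]]*vocoder_path:[[:space:]]*//p' "$1" | head -n 1)
    if [ -z "$vocoder" ] || [ "$vocoder" = "null" ]; then
        echo "vocoder_path is not set in $1" >&2
        return 1
    fi
    if [ ! -f "$vocoder" ]; then
        echo "vocoder_path points to a missing file: ${vocoder}" >&2
        return 1
    fi
    echo "vocoder_path OK: ${vocoder}"
}
```

From your project directory, run `check_vocoder_path config/everyvoice-text-to-spec.yaml`.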

Make your own copy of the training job submission script:

DATA_DIR=/exports/chss/eddie/ppls/groups/slpgpustorage/tts_cw

EXP_DIR=/exports/chss/eddie/ppls/groups/slpgpustorage/users/s1234567/tts_cw

TTS_PROJECT=your_project_name

cd ${EXP_DIR}/${TTS_PROJECT}

cp ${DATA_DIR}/train.sh .

Modify train.sh to specify:

- a maximum run time

- the priority job code

- a meaningful job name (of your choice)

- your University email address

#!/bin/bash

# Grid Engine options (lines prefixed with #$)

# Runtime limit of 6 hours:
#$ -l h_rt=6:00:00
#
# Set working directory to the directory where the job is submitted from:
#$ -cwd
#
# Request one GPU
# IMPORTANT: do not use more than one GPU !
# it will burn through your available GPU hours faster
#$ -q gpu
#$ -l gpu=1
#
# Specify a priority project for shorter queue time:
#$ -P ppls_ssgpu
#
# Name your job so you can easily find it:
#$ -N my_meaningful_job_name
#
# Specify an email address to get job notifications:
#$ -M s1234567@ed.ac.uk

# Initialise the environment modules
. /etc/profile.d/modules.sh
module load anaconda
conda activate py312torch27cuda118

# Train the model
everyvoice train text-to-spec config/everyvoice-text-to-spec.yaml
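Before submitting, it is worth reviewing the scheduler options you have just edited. As a small sketch, the function below lists only the Grid Engine directives (the lines starting with #$) from a job script, so that the run time, job name and email address can be checked at a glance. The function name is our own.

```shell
# Hypothetical helper: list the Grid Engine directives (lines starting
# with "#$") in a job script, with the "#$" prefix removed.
show_job_options() {
    grep '^#\$' "$1" | sed 's/^#\$[[:space:]]*//'
}
```

Running `show_job_options train.sh` should print one option per line, for example `-l h_rt=6:00:00` and `-N my_meaningful_job_name`.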

Now we can submit this job to the scheduler:

qsub train.sh

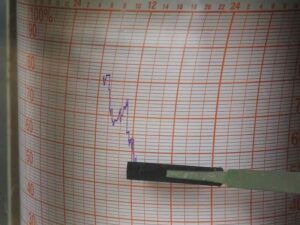

Use the skills you learned earlier to monitor this job. You should be able to:

- Examine the queue to see whether the job is still waiting or has started running

- Find the output files and inspect their contents
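As a reminder of how these two steps might look in practice: qstat lists your jobs, with state qw for a job that is waiting and r for one that is running. By default, Grid Engine writes a job's standard output to a file named job_name.o followed by the job ID, and its standard error to the matching .e file, in the working directory. The sketch below finds the most recent output file for a given job name. The function name is hypothetical, and it assumes you run it from the directory you submitted the job from.

```shell
# Hypothetical helper: print the most recently modified standard output
# file for a given job name. Grid Engine names these <job_name>.o<job_id>
# (and <job_name>.e<job_id> for standard error) by default.
latest_job_log() {
    ls -t "$1".o* 2>/dev/null | head -n 1
}
```

For example, `tail -f "$(latest_job_log my_meaningful_job_name)"` follows the newest output file as training proceeds; press Ctrl-C to stop following it.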

For an ARCTIC corpus comprising approx. 1100 sentences and approx. 1 hour of speech, training for 1000 epochs should take less than 3 hours.